Technical exploration of computer vision and its role in precision work

From Blind Automation to Intelligent Vision

Robots were once blind machines—capable of precision but only within pre-programmed boundaries. They could weld, assemble, or sort items, but only if everything was perfectly placed and predictable. That era is over.

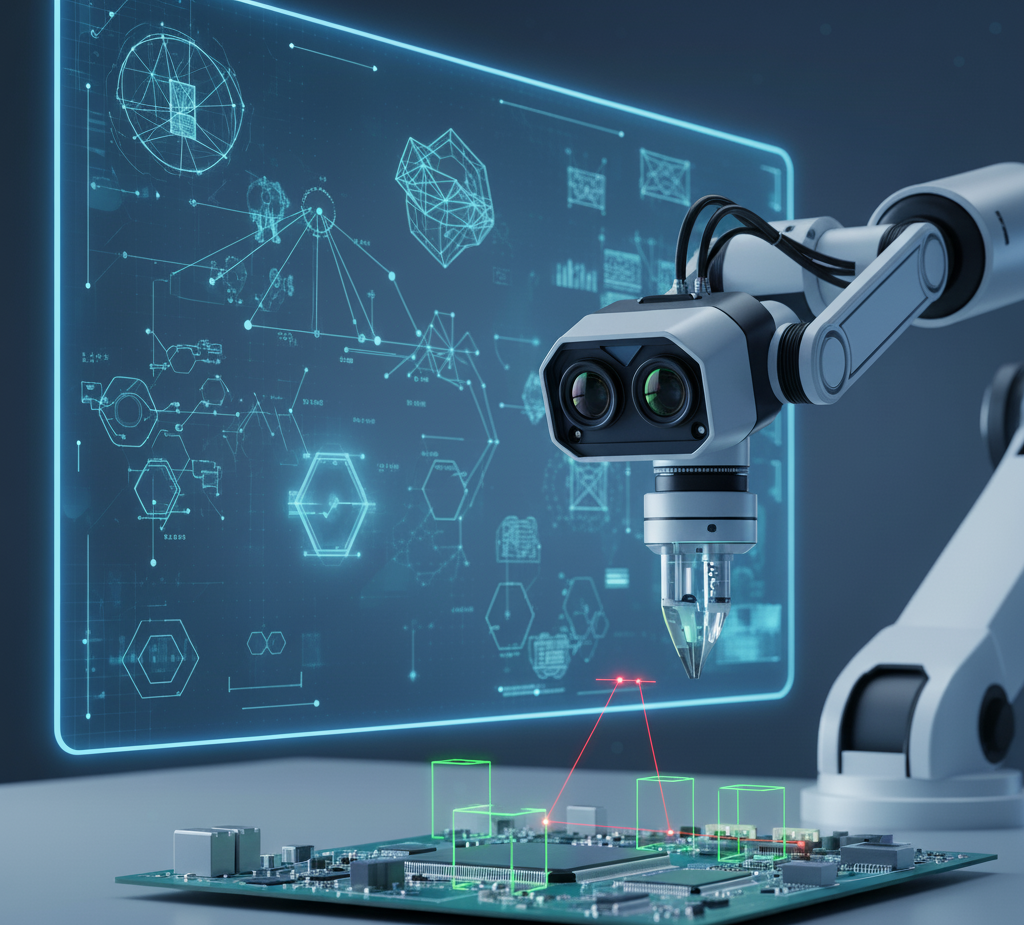

Today, vision systems have transformed robots into intelligent, adaptable machines that can see, understand, and react to their environment. Powered by computer vision, AI, and advanced imaging technologies, vision-enabled robots are performing tasks that once seemed possible only for humans—such as microchip inspection, surgical assistance, quality control, and autonomous navigation.

In modern manufacturing and beyond, vision in robotics is no longer a luxury—it’s a necessity. This article explores how robotic vision works, the technologies that power it, its real-world applications, and why it’s a cornerstone of Industry 4.0.

What Exactly Is a Robotic Vision System?

A robotic vision system enables robots to detect objects, measure features, interpret scenes, and make decisions based on visual input. It typically consists of:

| Component | Function |
| --- | --- |
| Camera / Sensor | Captures 2D or 3D images of the environment |
| Lighting System | Ensures clarity and contrast in visual data |
| Image Processing Software | Converts raw pixels into meaningful data |
| AI / Algorithms | Recognize patterns and defects, and make decisions |
| Robotic Controller | Tells the robot how to respond based on visual data |

This system mimics human sight and perception—but with robotic precision and speed.

How Computer Vision Works in Robotics

While traditional automation follows static programming, computer vision allows robots to interpret their surroundings dynamically. Here’s the basic workflow:

- Image Acquisition: Cameras capture images or video frames.

- Pre-Processing: Noise removal, contrast adjustments, edge enhancement.

- Feature Extraction: Detecting shapes, edges, barcodes, objects, or surface patterns.

- Object Recognition / Classification: Using AI models to identify what the robot “sees.”

- Decision Making & Action: Robot performs a task—pick, assemble, reject, align, or move.
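The five stages above can be sketched end to end in a few lines. This is a toy illustration, not a production pipeline: the hard-coded frame stands in for a camera driver, the area rule stands in for a trained AI model, and every function name and threshold here is an assumption made for the example.

```python
def acquire_image():
    """Image acquisition: stand-in for a camera driver (tiny 8-bit grayscale frame)."""
    return [
        [10, 12, 11, 10, 13, 11],
        [11, 200, 210, 205, 12, 10],
        [12, 198, 255, 207, 11, 12],
        [10, 202, 209, 201, 13, 11],
        [11, 12, 10, 11, 12, 10],
    ]


def preprocess(image):
    """Pre-processing: stretch contrast so pixel values span 0.0 to 1.0."""
    lo = min(min(row) for row in image)
    hi = max(max(row) for row in image)
    span = (hi - lo) or 1  # avoid division by zero on a flat image
    return [[(p - lo) / span for p in row] for row in image]


def extract_features(image, threshold=0.5):
    """Feature extraction: area and centroid of the bright region."""
    pixels = [
        (r, c)
        for r, row in enumerate(image)
        for c, p in enumerate(row)
        if p > threshold
    ]
    if not pixels:
        return {"area": 0, "centroid": None}
    n = len(pixels)
    centroid = (sum(r for r, _ in pixels) / n, sum(c for _, c in pixels) / n)
    return {"area": n, "centroid": centroid}


def classify(features, min_area=6, max_area=12):
    """Classification: a simple area rule standing in for a trained model."""
    if features["area"] == 0:
        return "empty"
    return "good_part" if min_area <= features["area"] <= max_area else "defect"


def decide(label):
    """Decision making: map the label to a robot action."""
    return {"good_part": "pick", "defect": "reject", "empty": "wait"}[label]


def run_pipeline():
    frame = acquire_image()
    features = extract_features(preprocess(frame))
    label = classify(features)
    return features, label, decide(label)


features, label, action = run_pipeline()
print(features, label, action)
```

In a real cell, only the structure carries over: each stage would be replaced by a camera SDK call, an image-processing library, and a trained model.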

Today’s vision systems rely heavily on deep learning, allowing them to recognize complex patterns like defects in metal, wrinkles in fabric, or micro-cracks in glass—tasks extremely difficult with rule-based programming.

2D vs. 3D Vision Systems: What’s the Difference?

| Feature | 2D Vision | 3D Vision |
| --- | --- | --- |
| Captures | Flat image | Depth + spatial geometry |
| Best For | Barcodes, surface inspection, label verification | Bin-picking, object localization, robotic assembly |
| Cost | Lower | Higher |
| Limitations | No depth perception | Higher processing demand |

2D systems are ideal for quality checks and presence detection.

3D systems, powered by stereo cameras or structured light sensors, are essential for bin picking, object orientation, and autonomous navigation.
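To make the depth idea concrete, here is a minimal sketch of how a rectified stereo camera pair turns a pixel offset into a distance, using the standard pinhole relation depth = focal length × baseline / disparity. The function name and the example numbers are illustrative assumptions, not values from any particular sensor.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth in meters for a rectified stereo pair.

    focal_px:     focal length expressed in pixels
    baseline_m:   distance between the two camera centers, in meters
    disparity_px: horizontal shift of the same point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


# Hypothetical rig: 800 px focal length, cameras 10 cm apart.
print(depth_from_disparity(800.0, 0.10, 40.0))  # nearer objects shift more
print(depth_from_disparity(800.0, 0.10, 80.0))
```

Because depth is inversely proportional to disparity, a one-pixel matching error matters far more for distant objects than for near ones, which is one reason 3D systems demand more processing and care than 2D ones.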

Core Technologies Enabling Robotic Vision

High-Resolution Cameras and Sensors

- Smart cameras with built-in processors reduce latency.

- Time-of-Flight (ToF) sensors measure distance by timing how long an emitted light pulse takes to reflect back from a surface.

- Hyperspectral imaging records many narrow wavelength bands per pixel, revealing the chemical makeup of a surface; it is used in food processing and agriculture.
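The Time-of-Flight principle reduces to one line of arithmetic: the light travels to the target and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below assumes an idealized pulse-timing sensor; the function name is made up for illustration.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # exact, by definition of the meter


def tof_distance_m(round_trip_s):
    """Distance to the target given the measured round-trip time of a light pulse."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2


# A 10-nanosecond round trip corresponds to roughly 1.5 meters.
print(tof_distance_m(10e-9))
```

The numbers show why ToF hardware is demanding: resolving a single millimeter means resolving time differences of only a few picoseconds.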

Deep Learning and Neural Networks

- Convolutional Neural Networks (CNNs) learn from labeled example images to spot defects and classify components, rather than relying on hand-written rules.

- Models like YOLO (object detection), ResNet (image classification), and Mask R-CNN (instance segmentation) are widely used in robotic vision deployments.
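The core operation inside a CNN layer is a small filter sliding across the image. The sketch below implements that operation in plain Python (as cross-correlation with no padding, which is what deep learning frameworks conventionally call convolution). In a trained network the filter weights are learned from data; here a hand-written vertical-edge kernel is used purely as an assumption for illustration.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` without padding and return the response map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Responds strongly where brightness increases from left to right.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

# Dark region on the left, bright region on the right.
image = [
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
]

print(conv2d_valid(image, VERTICAL_EDGE))  # [[0, 27, 27], [0, 27, 27]]
```

The response is zero over the flat dark area and large wherever the window straddles the edge. A CNN stacks many such filters and learns their weights so that later layers respond to scratches, cracks, or whole components.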

Edge Computing and AI Chips

Instead of sending data to a cloud server, edge AI processors analyze visual data in real time—crucial for tasks like object picking or defect detection on a fast-moving conveyor belt.
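A quick calculation shows why latency matters on a moving line: while the system is still processing a frame, the part keeps travelling. The belt speed and latency figures below are hypothetical round numbers chosen for illustration, not measurements of any real edge or cloud setup.

```python
def travel_during_latency_mm(belt_speed_m_s, latency_ms):
    """How far a part moves on the conveyor while the vision result is pending."""
    speed_mm_per_s = belt_speed_m_s * 1000.0
    latency_s = latency_ms / 1000.0
    return speed_mm_per_s * latency_s


# Hypothetical belt at 2 m/s, comparing a short and a long processing delay.
print(travel_during_latency_mm(2.0, 10.0))   # about 20 mm
print(travel_during_latency_mm(2.0, 150.0))  # about 300 mm
```

With the longer delay in this example, the part has moved roughly 30 cm before the robot can act, so the controller must either compensate by tracking the belt or cut the delay by processing closer to the camera.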

Calibration, Sensors & Precision Optics

Robotic vision requires exact calibration between cameras and robotic arms—linking the visual world to physical workspace coordinates.
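As a simplified illustration of what calibration provides, the sketch below maps a pixel coordinate to robot workspace coordinates. It assumes the simplest possible case: a camera looking straight down at a flat work surface, so the mapping is just a scale, a rotation, and an offset. The `PlanarCalibration` type and its values are hypothetical; real systems estimate these parameters (and lens distortion) through a dedicated calibration procedure.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanarCalibration:
    """Hypothetical result of calibrating a top-down camera to a robot frame."""
    mm_per_pixel: float   # image scale on the work surface
    rotation_deg: float   # rotation of the image axes relative to the robot axes
    offset_x_mm: float    # robot X of the image origin
    offset_y_mm: float    # robot Y of the image origin


def pixel_to_robot(u, v, calib):
    """Convert pixel (u, v) into robot-frame (x, y) in millimeters."""
    theta = math.radians(calib.rotation_deg)
    x_mm = u * calib.mm_per_pixel
    y_mm = v * calib.mm_per_pixel
    x_robot = math.cos(theta) * x_mm - math.sin(theta) * y_mm + calib.offset_x_mm
    y_robot = math.sin(theta) * x_mm + math.cos(theta) * y_mm + calib.offset_y_mm
    return x_robot, y_robot


calib = PlanarCalibration(mm_per_pixel=0.5, rotation_deg=90.0,
                          offset_x_mm=100.0, offset_y_mm=50.0)
print(pixel_to_robot(20, 0, calib))  # approximately (100.0, 60.0)
```

Any error in these few parameters becomes a positioning error at the gripper, which is why calibration has to be exact.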

Applications: Where Vision Systems Are Revolutionizing Robotics

Quality Inspection & Defect Detection

- Electronics manufacturers use vision-equipped robots to detect defects on microchips that are themselves smaller than a grain of rice.

- Automotive factories inspect welds, paint uniformity, and alignment using vision sensors.

Robotic Assembly & Micro-Precision Tasks

- Vision-guided robotic arms can insert micro-components onto circuit boards with sub-millimeter accuracy.

- Cameras ensure exact alignment, even if parts are slightly misoriented.
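One simple way a vision system can correct for a misoriented part is to locate two reference marks on it, compute the angle of the line between them, and rotate the gripper by the difference from the expected angle. The sketch below assumes the two marks have already been found in the image; the function names are illustrative, not taken from any vendor API.

```python
import math


def part_angle_deg(mark_a, mark_b):
    """Angle of the line from mark_a to mark_b, in degrees."""
    dx = mark_b[0] - mark_a[0]
    dy = mark_b[1] - mark_a[1]
    return math.degrees(math.atan2(dy, dx))


def rotation_correction_deg(measured_deg, nominal_deg=0.0):
    """Smallest signed rotation from measured to nominal, in the range [-180, 180)."""
    return (nominal_deg - measured_deg + 180.0) % 360.0 - 180.0


measured = part_angle_deg((0.0, 0.0), (10.0, 10.0))
print(measured)                           # close to 45 degrees
print(rotation_correction_deg(measured))  # close to -45 degrees
```

Wrapping the result into a half-open 360° range keeps the gripper from taking the long way round, for example turning +10° instead of -350°.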

Bin Picking & Logistics Automation

Using 3D vision, robots can identify, locate, and pick objects from randomly filled boxes—critical in warehouses.
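Once the 3D system has located the objects, the robot still has to decide which one to grab first. A common-sense heuristic, sketched below, is to take the topmost confidently detected object, since it is least likely to be buried under others. The `Detection` type, its fields, and the confidence threshold are assumptions for this example; real bin-picking software also checks grasp reachability and collisions.

```python
from typing import NamedTuple, Optional, Sequence


class Detection(NamedTuple):
    """Hypothetical output of a 3D vision system for one object in the bin."""
    label: str
    x_mm: float
    y_mm: float
    z_mm: float        # height above the bin floor
    confidence: float  # detector score between 0 and 1


def choose_next_pick(
    detections: Sequence[Detection], min_confidence: float = 0.8
) -> Optional[Detection]:
    """Return the highest-lying detection that meets the confidence threshold."""
    candidates = [d for d in detections if d.confidence >= min_confidence]
    if not candidates:
        return None
    return max(candidates, key=lambda d: d.z_mm)


bin_contents = [
    Detection("bracket", 120.0, 80.0, 35.0, 0.95),
    Detection("bracket", 60.0, 150.0, 72.0, 0.91),
    Detection("bracket", 200.0, 40.0, 90.0, 0.40),  # highest, but too uncertain
]
print(choose_next_pick(bin_contents))
```

After each pick the scene changes, so the bin is typically re-imaged before the next choice is made.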

Medical Robotics & Surgery

Surgical systems such as the da Vinci give surgeons a magnified, high-definition 3D view of the operating field, supporting precise incisions and suture placement.

Autonomous Vehicles & Drones

Computer vision enables real-time object detection, lane following, obstacle avoidance, and terrain recognition.

Challenges in Robotic Vision

Despite its advancements, robotic vision is not without obstacles:

| Challenge | Description |
| --- | --- |
| Lighting Variations | Shadows or changes in brightness can cause detection errors |
| Reflective & Transparent Surfaces | Glass, polished metal, and water produce glare and distortions that confuse sensors |
| High Data Processing Demands | Deep learning models need capable GPUs or AI accelerators to run in real time |
| Complex Calibration and Setup | Precise alignment between the camera and robot can be time-consuming |
| Cost for Small Factories (SMEs) | Advanced vision systems remain expensive to implement |

Future Trends: What’s Next for Vision in Robotics?

- AI + Vision = Fully Autonomous Robots: Robots that learn new tasks by observing human demonstrations.

- Digital Twins + Vision Sensors: Real-world visual data is mirrored in a virtual environment for simulation and predictive analytics.

- Vision-Guided Cobots (Collaborative Robots): Robots that safely work side-by-side with humans using 360° vision and gesture recognition.

- Vision Systems in Agriculture: Robots identifying ripe fruit, detecting disease on leaves, or monitoring livestock in real time.

- Edge AI and 5G Integration: On-device inference and faster data transfer cut reaction times, which suits high-speed production lines and autonomous vehicles.

Seeing the Future of Automation

In the world of Industry 4.0, robots that see are robots that understand. Vision systems are not just add-ons—they are the bridge between mechanical precision and intelligent decision-making. From factories to hospitals and warehouses to highways, computer vision is redefining what machines can do.

The future of robotics is not just automated—it’s perceptive, adaptive, and deeply visual.