Walk into most manufacturing plants today and you’ll find something quietly remarkable: machine data that has been accumulating for years, sometimes decades, and that nobody is reading. Temperature curves. Vibration signatures. Cycle time logs. Power draw patterns. The data exists. It’s stored. It may even be timestamped and labeled. And yet the vast majority of it has never been used to make a single operational decision.

This is not a technology problem. It is an attention problem—and it has a solution that costs nothing to start.

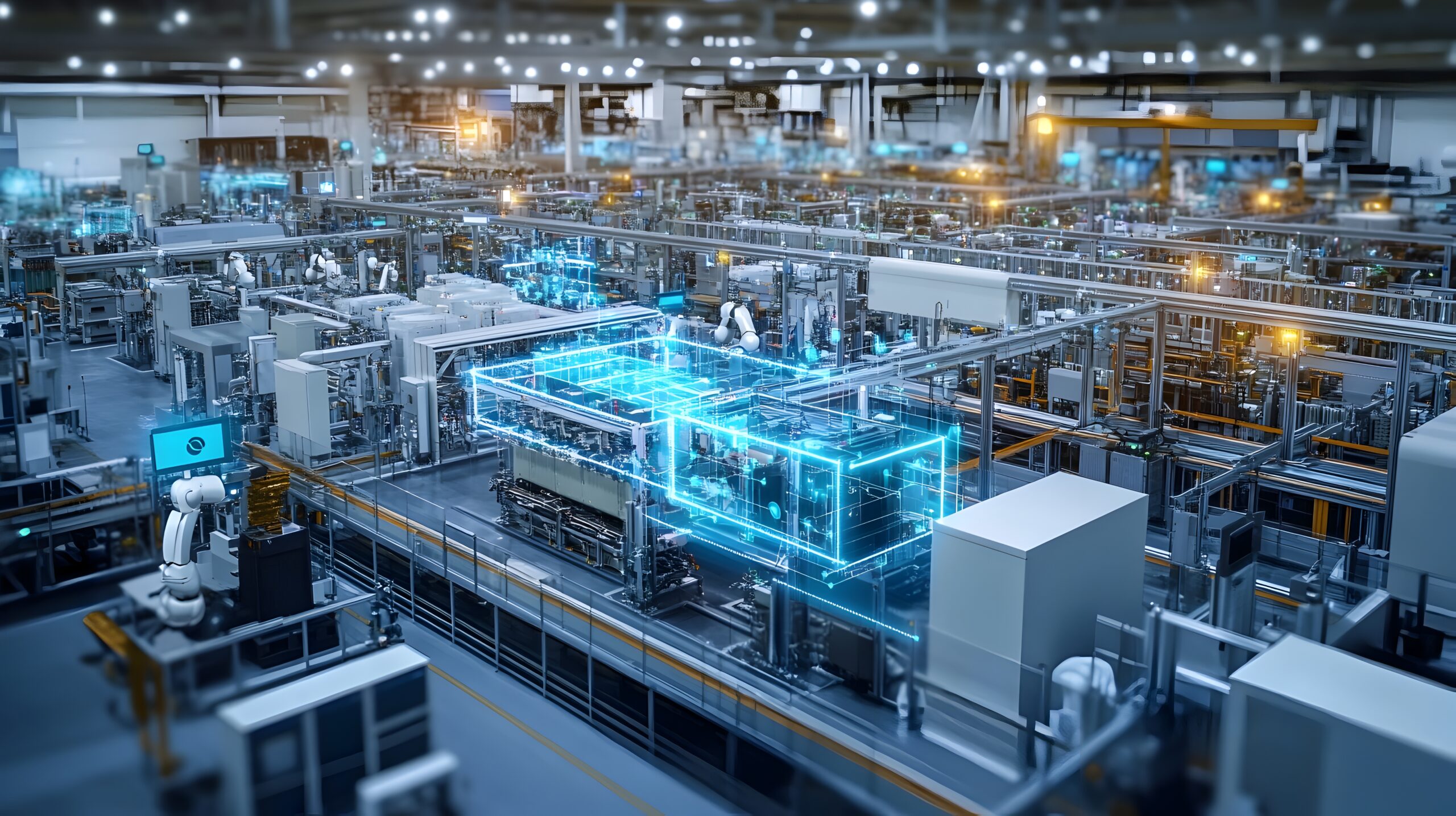

The premise of the smart factory has always implied massive investment: new IIoT hardware, cloud platforms, AI subscriptions, systems integrators, and years of integration work. That may eventually be the right path. But there is an overlooked first chapter that most manufacturers skip entirely: the systematic extraction of value from data they already own. This article is about that chapter.

The Machine Data Is Already There—You’re Just Not Using It

Most industrial equipment manufactured after the mid-2000s has onboard data logging capability. PLCs (Programmable Logic Controllers), SCADA systems, CNC machines, servo drives, and even older DCS (Distributed Control Systems) platforms generate streams of operational data as a byproduct of their normal functioning. The problem is not collection—it’s retrieval, contextualization, and action.

In a typical mid-size plant, this machine data sits in one or more of three places:

1. On the PLC itself

Most PLCs store event logs, fault histories, and operational counters internally. Many are set to overwrite this data cyclically. Almost none of it is reviewed unless something breaks.

2. In the SCADA historian

SCADA platforms like Ignition, FactoryTalk, or WinCC often include a process historian that logs tagged data at configurable intervals. These historians can contain years of process data—and are frequently queried only during incident investigations.

3. In the MES or ERP system

Manufacturing Execution Systems and ERP platforms capture production orders, downtime codes, quality inspection records, and labor hours. This data is typically used for reporting—not for operational intelligence.

The question is not whether this data is being collected. It almost certainly is. The question is whether anyone has looked at it with the right lens.

| Key Insight: A 2023 survey by LNS Research found that fewer than 30% of manufacturers systematically analyze the operational data already stored in their existing systems. The rest, more than 70%, are in effect operating blindfolded. |

Five Ways to Extract Value Without Buying Anything

The following are concrete, low-cost starting points that any operations or engineering team can pursue using tools they likely already have access to.

1. Mine Your Downtime History

Most modern SCADA and MES systems log downtime events, usually tagged by machine, shift, and fault code. If yours does, you are sitting on a root-cause analysis goldmine that has never been properly excavated.

Start by pulling three to twelve months of downtime records and aggregating them by fault code, machine, and time of day. You will almost always find that a small number of fault types account for a disproportionate share of lost production time. This is not a new observation; it is a direct application of the Pareto principle. But it is one that most plants have never formally applied to their own data.

Action: Export your downtime log to Excel or Power BI. Build a simple pivot table sorted by total minutes lost per fault code. Focus your maintenance attention on the top three causes. No new software required.
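If you prefer a script to a pivot table, the same aggregation takes only a few lines of Python. This is a minimal sketch that assumes the downtime log has been exported as a list of records with hypothetical `fault_code` and `minutes` fields; real export column names will differ by system.

```python
from collections import defaultdict


def downtime_pareto(events):
    """Rank fault codes by total minutes lost.

    `events` is an iterable of dicts with hypothetical keys "fault_code"
    and "minutes" (adapt these to your own export). Returns a list of
    (fault_code, total_minutes, cumulative_share) tuples, largest first.
    """
    totals = defaultdict(float)
    for event in events:
        totals[event["fault_code"]] += event["minutes"]

    grand_total = sum(totals.values())
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)

    result = []
    running = 0.0
    for code, minutes in ranked:
        running += minutes
        # Cumulative share: how much of all lost time the top N codes explain.
        share = running / grand_total if grand_total else 0.0
        result.append((code, minutes, share))
    return result


if __name__ == "__main__":
    # Illustrative records only, not real plant data.
    sample = [
        {"fault_code": "JAM", "minutes": 42},
        {"fault_code": "E-STOP", "minutes": 5},
        {"fault_code": "JAM", "minutes": 38},
        {"fault_code": "CHANGEOVER", "minutes": 30},
        {"fault_code": "SENSOR", "minutes": 10},
    ]
    for code, minutes, share in downtime_pareto(sample)[:3]:
        print(f"{code:<12}{minutes:>6.0f} min  cumulative {share:.0%}")
```

The cumulative-share column is the useful part: it shows at a glance whether the top three causes explain most of the lost time or only a fraction of it.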

2. Build a Baseline from Your Historian

Process historians record thousands of data points every minute—temperatures, pressures, flow rates, motor currents, cycle times. Most plants use this data reactively: pulling it up after a quality failure or equipment incident to understand what went wrong.

The insight that almost nobody pursues: use that same historian data to define what ‘normal’ looks like. Pull machine data from your best-performing production windows—highest throughput, lowest defect rate, fewest stoppages—and build a statistical baseline. Then compare current performance against that baseline in real time.

This is the conceptual foundation of anomaly detection, and it does not require a machine learning platform to get started. A rolling average and standard deviation, tracked in a historian trend view, can catch many developing issues before they become failures.
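As a rough illustration of the rolling average and standard deviation approach, the sketch below flags any reading that sits more than `k` standard deviations from the mean of the preceding `window` readings. The window size, the threshold `k`, and the function name are illustrative assumptions, not recommended settings for any particular process.

```python
from collections import deque
from statistics import mean, stdev


def flag_deviations(readings, window=20, k=3.0):
    """Yield (index, value, z_score) for readings that sit more than k standard
    deviations from the mean of the preceding `window` readings."""
    history = deque(maxlen=window)  # trailing window; oldest values drop off
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)  # sample standard deviation of the window
            if sigma > 0:
                z = (value - mu) / sigma
                if abs(z) > k:
                    yield i, value, z
        history.append(value)


if __name__ == "__main__":
    # Synthetic temperatures: a small repeating ripple, then a sudden jump.
    temps = [70.0 + 0.1 * (i % 5) for i in range(40)] + [75.0]
    for i, value, z in flag_deviations(temps):
        print(f"sample {i}: {value:.1f} (z = {z:.1f})")
```

Because flagged values still enter the window, a sustained shift eventually becomes the new normal. To compare against a fixed baseline drawn from your best production windows instead, compute the mean and standard deviation once from those windows and hold them constant.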

| Real-World Example: A plastics extruder found that a barrel temperature deviation of more than 3°C in either direction from the historical mean during the first 20 minutes of a production run predicted a dimensional quality failure 78% of the time. They identified this pattern using two years of historical data and a single Excel regression analysis. No new hardware was purchased. |
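The extruder team used a regression; the sketch below is something simpler. Once you have a candidate rule like "more than 3°C off the mean in the first 20 minutes," it counts how often runs that trip the rule go on to fail. The per-run data layout (`samples`, `failed`) is a hypothetical structure you would build from your own historian and inspection exports.

```python
def rule_hit_rate(runs, historical_mean, threshold_c=3.0, window_min=20):
    """Of the runs whose barrel temperature strayed more than `threshold_c`
    from `historical_mean` during the first `window_min` minutes, return the
    fraction that later failed dimensional inspection (None if none strayed).

    Each run is a dict with hypothetical keys:
      "samples": list of (minutes_since_start, temperature_c)
      "failed":  True if the run failed dimensional inspection
    """
    flagged = 0
    flagged_and_failed = 0
    for run in runs:
        early = [temp for minute, temp in run["samples"] if minute < window_min]
        if any(abs(temp - historical_mean) > threshold_c for temp in early):
            flagged += 1
            if run["failed"]:
                flagged_and_failed += 1
    return flagged_and_failed / flagged if flagged else None


if __name__ == "__main__":
    # Illustrative runs only.
    runs = [
        {"samples": [(0, 200.0), (10, 204.5)], "failed": True},
        {"samples": [(0, 200.0), (10, 201.0)], "failed": False},
        {"samples": [(5, 196.0), (30, 200.0)], "failed": False},
    ]
    print(rule_hit_rate(runs, historical_mean=200.0))
```

It is worth also counting failures among runs that did *not* trip the rule; a rule that fires before most failures but also before most good runs is not much of a predictor.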

3. Correlate Quality Machine Data with Process Variables

Quality inspection data—whether from manual checks, CMM machines, or inline gauging—is typically stored in the MES or a standalone SPC (Statistical Process Control) system. Process variable data lives in the historian. These two datasets are almost never joined together.

When you do connect them, the results can be striking. By simply joining quality rejection timestamps with historian process data from the same time window, manufacturers have identified specific process conditions—a particular combination of temperature, feed rate, and humidity—that consistently produce out-of-spec product.

Action: Export a six-month quality rejection log with timestamps. Pull historian data for the same period for your key process variables. Join the datasets on timestamp in Excel, Python, or any BI tool. Look for process conditions that cluster around rejection events.
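The join itself is the step most teams get stuck on, because rejection timestamps rarely line up exactly with historian sample times. One common workaround is a windowed join: for each rejection, summarize the process variables over a short lookback period. The sketch below assumes a 10-minute lookback, historian rows that all carry the same tags, and hypothetical tag names; all of these are placeholders.

```python
from bisect import bisect_left, bisect_right
from datetime import datetime, timedelta
from statistics import mean


def process_context(rejections, samples, lookback=timedelta(minutes=10)):
    """Attach process conditions to each quality rejection.

    rejections: list of datetime stamps when parts were rejected
    samples:    list of (datetime, {tag: value}) historian rows, sorted by time
    Returns (rejected_at, {tag: mean value over the lookback window}) pairs;
    rejections with no historian data in their window are skipped.
    """
    times = [ts for ts, _ in samples]
    joined = []
    for rejected_at in rejections:
        # Historian rows with rejected_at - lookback <= ts <= rejected_at.
        lo = bisect_left(times, rejected_at - lookback)
        hi = bisect_right(times, rejected_at)
        window = [values for _, values in samples[lo:hi]]
        if not window:
            continue
        tags = window[0].keys()
        joined.append(
            (rejected_at, {tag: mean(row[tag] for row in window) for tag in tags})
        )
    return joined


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 8, 0)
    # Synthetic historian rows every 5 minutes (tag names are hypothetical).
    rows = [
        (start + timedelta(minutes=m), {"barrel_temp_c": 200.0 + m, "feed_rate": 1.0})
        for m in range(0, 30, 5)
    ]
    for ts, conditions in process_context([start + timedelta(minutes=20)], rows):
        print(ts, conditions)
```

Choose the lookback to match your process: if parts are inspected some time after they are made, the window should cover when the part was actually produced, not when it was rejected. Running the same summary over non-rejection periods gives the comparison you need to see which conditions cluster around rejections.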

4. Analyze Your OEE Calculation—Not Just the Number

Overall Equipment Effectiveness (OEE) is one of the most widely reported metrics in discrete manufacturing, and one of the most widely misunderstood. Most plants track OEE as a single number. Very few decompose it systematically into its three components (Availability, Performance, and Quality) and track each component’s subcomponents over time.

Availability losses tell you where equipment is failing. Performance losses tell you where it is running slower than its rated speed. Quality losses tell you where it is producing defective output. Each of these has a different root cause and a different remedy. Aggregating them into a single OEE figure and comparing it month-to-month obscures all of this signal.

If your MES or OEE software already captures the data to calculate OEE, it almost certainly also stores the underlying data needed to disaggregate it. Start there.
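Under the commonly used definitions, OEE = Availability × Performance × Quality, where Availability is run time divided by planned production time, Performance is (ideal cycle time × total count) divided by run time, and Quality is good count divided by total count. The sketch below computes all three for one period; the field names are illustrative, and your MES may define planned time or downtime slightly differently.

```python
from dataclasses import dataclass


@dataclass
class ShiftRecord:
    planned_min: float    # planned production time, minutes
    downtime_min: float   # unplanned stop time, minutes
    ideal_cycle_s: float  # rated (ideal) cycle time per unit, seconds
    total_count: int      # all units produced, good and bad
    good_count: int       # units that passed inspection


def oee_components(r: ShiftRecord) -> dict:
    """Break OEE into Availability x Performance x Quality for one period."""
    run_min = r.planned_min - r.downtime_min
    availability = run_min / r.planned_min
    # Time the output should have taken at rated speed, over actual run time.
    performance = (r.ideal_cycle_s * r.total_count / 60.0) / run_min
    quality = r.good_count / r.total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


if __name__ == "__main__":
    shift = ShiftRecord(planned_min=480, downtime_min=60, ideal_cycle_s=30,
                        total_count=700, good_count=665)
    for name, value in oee_components(shift).items():
        print(f"{name:<13}{value:.1%}")
```

In this made-up shift, OEE comes out around 69%, but the breakdown (87.5% availability, about 83% performance, 95% quality) shows that speed loss, not stoppages or scrap, is the largest of the three. A single OEE figure hides exactly that distinction.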

5. Listen to Your Energy Data

Energy consumption is one of the most underutilized diagnostic signals in manufacturing. Motor current draw, compressed air consumption, and utility metering data (much of it already collected by modern facilities for billing or cost allocation) contain a remarkable amount of information about equipment health and process efficiency.

A motor drawing 8% more current than its baseline may be under increased mechanical load, which can indicate bearing degradation, misalignment, or product buildup. A compressed air system that consistently overconsumes on second shift probably has a leak somewhere, and that leak can often be traced using nothing more than a consumption trend chart and a systematic walkdown.

Action: Pull monthly energy sub-meter data for your major assets. Plot it against production volume to normalize for output. Any asset showing a rising energy-per-unit trend is telling you something important.
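A minimal sketch of the normalization and trend check, assuming you have paired monthly kWh and unit counts for one asset: divide energy by output, then fit an ordinary least-squares slope to the per-unit series. A persistently positive slope is the rising energy-per-unit trend described above. The function name and sample numbers are illustrative.

```python
def energy_per_unit_trend(kwh, units):
    """Normalize monthly energy by output and fit a straight-line trend.

    kwh, units: equal-length monthly series for one asset (at least 2 months).
    Returns (kwh_per_unit list, slope in kWh/unit per month).
    """
    if len(kwh) != len(units) or len(kwh) < 2:
        raise ValueError("need two or more months of paired data")
    intensity = [e / u for e, u in zip(kwh, units)]
    n = len(intensity)
    x_bar = (n - 1) / 2          # mean of month indices 0..n-1
    y_bar = sum(intensity) / n
    # Ordinary least-squares slope of intensity against month index.
    num = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(intensity))
    den = sum((x - x_bar) ** 2 for x in range(n))
    return intensity, num / den


if __name__ == "__main__":
    # Illustrative sub-meter readings for one asset over six months.
    monthly_kwh = [12000, 12400, 11800, 12900, 13300, 13600]
    monthly_units = [4000, 4100, 3900, 4050, 4000, 3950]
    per_unit, slope = energy_per_unit_trend(monthly_kwh, monthly_units)
    print([round(v, 2) for v in per_unit], f"slope {slope:+.3f} kWh/unit/month")
```

Simple division assumes energy scales with output. An asset with a large idle or base load will show higher energy per unit in low-volume months for reasons unrelated to its health; for those assets, regressing kWh on units to separate fixed and variable consumption gives a cleaner signal.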

Why Machine Data Is Being Ignored: The Real Reasons

If this data is available and the value is real, why isn’t it being used? The honest answer is that it is not one reason but several, compounding each other.

The first is format fragmentation. Historian data is in one proprietary format. MES data is in another. Quality data is in a third. Joining them requires either integration work or manual export—neither of which anyone has been assigned to do.

The second is ownership ambiguity. IT manages the historian. Operations manages the MES. Quality manages the SPC system. No single person or team has both the access and the mandate to analyze machine data across all three.

The third is the absence of a structured question. ‘Use our data better’ is not an actionable brief. ‘Find the top three causes of second-shift downtime and quantify their impact on monthly output’ is. The analysis almost never happens because no one has translated the vague intention into a specific analytical question.

These are organizational problems, not technological ones. And they are solved not by buying a platform, but by assigning ownership and defining questions.

| Practical Advice: Before any digital transformation investment, appoint one person (a process engineer, a continuous improvement lead, or a data-capable operations manager) whose explicit role includes asking analytical questions of existing production data. This single act can do more to extract value from your current data infrastructure than any software purchase. |

When You’ve Exhausted the Free Upgrade: What Comes Next

This approach has limits, and it is worth being honest about them. You will reach a point where the questions you want to ask require data that you are not currently collecting. A predictive maintenance model for a critical bearing requires vibration data at frequencies your existing sensors cannot capture. A real-time quality feedback loop on a high-speed line requires lower latency than your historian’s polling interval can support. That is the moment to invest in new instrumentation.
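The vibration limit follows from the sampling theorem: a signal sampled every Δt seconds can only represent frequencies below 1/(2Δt). The sketch below applies that to a few sampling intervals; the intervals are illustrative, and a one-second historian poll is an assumption about a typical configuration, not a property of any specific product.

```python
def nyquist_limit_hz(sample_interval_s):
    """Highest frequency a fixed sampling interval can represent (half the sample rate)."""
    return 1.0 / (2.0 * sample_interval_s)


def can_resolve(signal_hz, sample_interval_s):
    """True if the signal frequency is below the Nyquist limit for this interval."""
    return signal_hz < nyquist_limit_hz(sample_interval_s)


if __name__ == "__main__":
    shaft_hz = 1800 / 60  # running speed of a motor turning at 1,800 rpm
    for interval_s in (1.0, 0.1, 0.001):  # historian poll vs dedicated sampling
        print(f"{interval_s:>6} s -> limit {nyquist_limit_hz(interval_s):8.1f} Hz, "
              f"resolves {shaft_hz:.0f} Hz: {can_resolve(shaft_hz, interval_s)}")
```

A tag polled once per second tops out at 0.5 Hz, while even the basic running speed of a 1,800 rpm motor is 30 Hz; bearing fault signatures sit higher still. The Nyquist limit is only a minimum, and dedicated vibration systems typically sample well above it, which is why this gap is closed with new sensors rather than better analysis.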

But that moment comes later than most vendors would have you believe—and it arrives with much better clarity about what you actually need to buy, because you have already learned what your existing data can and cannot tell you.

The manufacturers who skip this step and go straight to new hardware often end up with the same problem they started with: lots of data, and no clear analytical framework for using it. The technology changes; the underlying question remains unanswered.

The smarter sequence is: first, extract maximum value from existing data. Second, identify the specific analytical gaps that existing data cannot close. Third, invest precisely in closing those gaps. This approach costs less, delivers value faster, and produces a far more coherent technology roadmap.

The Smart Factory Starts with Asking Better Questions

The narrative around Industry 4.0 and smart manufacturing has, understandably, been dominated by technology. New sensors, digital twins, AI-driven process control, edge computing—these are genuinely powerful capabilities, and they will define the competitive landscape of manufacturing over the next decade.

But the foundational capability that all of those technologies depend on is not a piece of hardware. It is the organizational habit of asking systematic questions of production data and acting on the answers. That habit does not require investment. It requires intention.

Your machines have been trying to tell you something for years. The cost of listening is zero. The cost of not listening is everything you’ve been leaving on the table.